If you have an OTT subscription, you have probably noticed the popularity of the Korean show Squid Game and the Spanish-language show Narcos. Both chartbusters were dubbed and subtitled in several languages, which helped make them worldwide successes. Video content is now routinely localized to reach new audiences around the globe. With rising internet connectivity and rapid growth in video consumption, the living room is no longer the only place to watch. Many viewers on social media have become used to watching videos with closed captions or subtitles, whether out of preference, because of a noisy environment, or because of hearing problems. Captions also let people follow a video without headphones.

Table of Contents:

- What are Captions?

- How Do Closed Captions Work?

- What are Subtitles?

- Difference Between Closed Captions and Subtitles

- How Closed Captions vs Subtitles Terminology varies with Countries

- Importance of Multi-Language Captions and Subtitles

- Need for Captions and Subtitles

- Subtitle and Caption File Formats

- How to Upload your Subtitles and Captions for Hosted Videos

- FAQs

Many people think subtitles and closed captions are the same thing, but there is a difference. Captions are designed to provide access for people who are deaf or hard of hearing, while subtitles translate foreign-language dialogue. This blog gives a deeper understanding of closed captions, their types, subtitles, and the differences between them.

What are Captions?

Captions are the textual representation of the audio content of a video, television broadcast, live event, or other production, whatever its subject. The dialogue and all associated sound effects are displayed on screen along with the video in real time. Captioning helps people who are deaf or hard of hearing follow everything that is happening in the video. Captions were introduced for television in the 1970s and were later made mandatory by law.


Types of Captions

Captions are commonly of two types: open captions and closed captions.

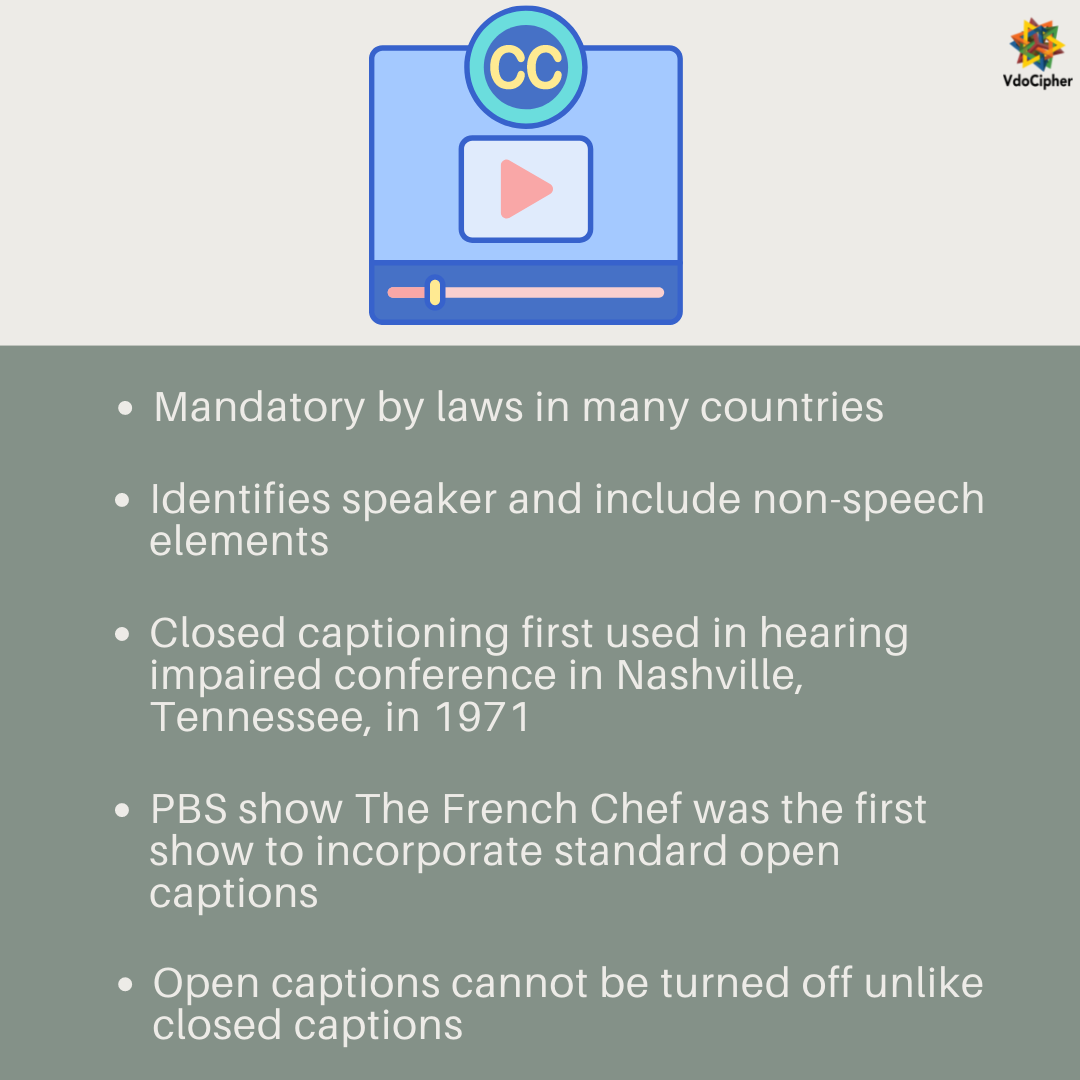

Open captions were used in the early years of captioning. They are ‘burned in’ (hard-coded, or embedded directly into the video) and cannot be turned off. Because they limit the viewer’s choice, they are mostly used when captions are an absolute necessity. A movie theatre is one example: without open captions, viewers with hearing problems could miss the dialogue. Open captioning in broadcasts began in the 1970s with PBS’s The French Chef. Today, open captions are commonly used in social media videos and offline videos.

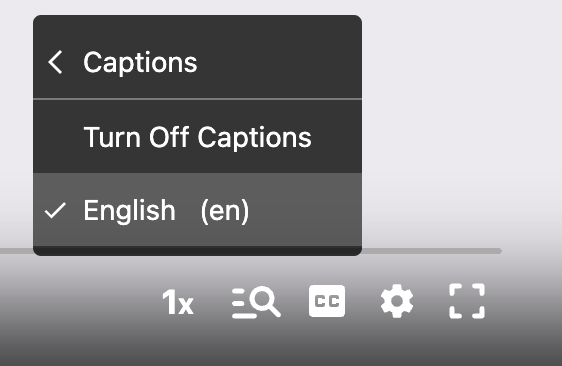

The most popular type of captioning is closed captioning. Here, viewers can turn the captions on and off at their discretion. The captions are not visible until the user turns them on. Some remote controls have a dedicated caption button.

The symbol for closed captions is two C’s, [CC], which you will usually find at the bottom of the video screen.

How Do Closed Captions Work?

In analog television, closed captions are embedded in the video signal, in line 21 of the vertical blanking interval. The vertical blanking interval is the part of the television signal that tells the electron gun to return to the upper-left corner of the screen to start painting the next frame. Line 21 of this interval is assigned to captioning, as well as to V-chip and time information. The captions become visible to the viewer once they are decoded, either by a decoder built into the television or by a separate decoder.
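As a rough illustration of what travels on line 21, here is a minimal Python sketch. It assumes the CEA-608 convention, in which each video field carries two bytes and each byte holds 7 data bits plus an odd-parity bit. To stay on safe ground it only handles letters, digits, and spaces, whose codes match ASCII. The function names are made up for this example and do not come from any library.

```python
def with_odd_parity(code: int) -> int:
    """Set the top bit when needed so the byte has an odd number of 1 bits."""
    if not 0 <= code < 0x80:
        raise ValueError("line 21 characters are 7-bit values")
    ones = bin(code).count("1")
    return code | 0x80 if ones % 2 == 0 else code


def encode_pairs(text: str) -> list[tuple[int, int]]:
    """Pack text into (byte1, byte2) pairs, one pair per video field."""
    for ch in text:
        # Assumption: only characters whose caption code equals ASCII.
        if not (ch.isascii() and (ch.isalnum() or ch == " ")):
            raise ValueError(f"unsupported character in this sketch: {ch!r}")
    data = [with_odd_parity(ord(ch)) for ch in text]
    if len(data) % 2:
        data.append(with_odd_parity(0x00))  # pad the last pair with a null
    return [(data[i], data[i + 1]) for i in range(0, len(data), 2)]


if __name__ == "__main__":
    print([f"{a:02X} {b:02X}" for a, b in encode_pairs("CC")])  # ['43 43']
```

A decoder in the television does the reverse: it checks the parity bit, drops it, and turns the remaining 7 bits back into characters or caption commands.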

During live events, such as live news broadcasts on television, closed captioning becomes more challenging. The captions appear with a delay of a few seconds after the words are spoken. Skilled stenographers listen to the live broadcast and type out the words, which are then captioned on the television screen.

What are Subtitles?

Simply put, subtitles are a transcription or translation of the video dialogue for cases where the sound is available but not understood by the viewer, such as a video played in a foreign language. Although subtitles look similar to closed captions, they provide a text form of the dialogue on the assumption that the viewer can hear. They are categorized as intralingual subtitles (same language) and interlingual subtitles (different language).

Nowadays, many platforms combine closed captions and subtitles under the category ‘Subtitles for the deaf or hard-of-hearing (SDH).’

- SDH subtitles are intended for deaf or hard-of-hearing viewers, unlike regular subtitles. SDH includes additional information, such as speaker tags, sound effects, and other non-speech elements. They usually appear centered in the lower third of the screen.

- For example, SDH subtitles will indicate audio elements such as music, sneezing, or laughter. Like regular subtitles, SDH runs in sync with the audio or video file.

- SDH subtitles can be carried over HDMI (High-Definition Multimedia Interface) because they are encoded as a series of pixels. Closed captions are not supported over HDMI because they are encoded as text and commands.

Difference Between Closed Captions and Subtitles

Usually, people consider closed captions and subtitles as the same, but there is a distinction.

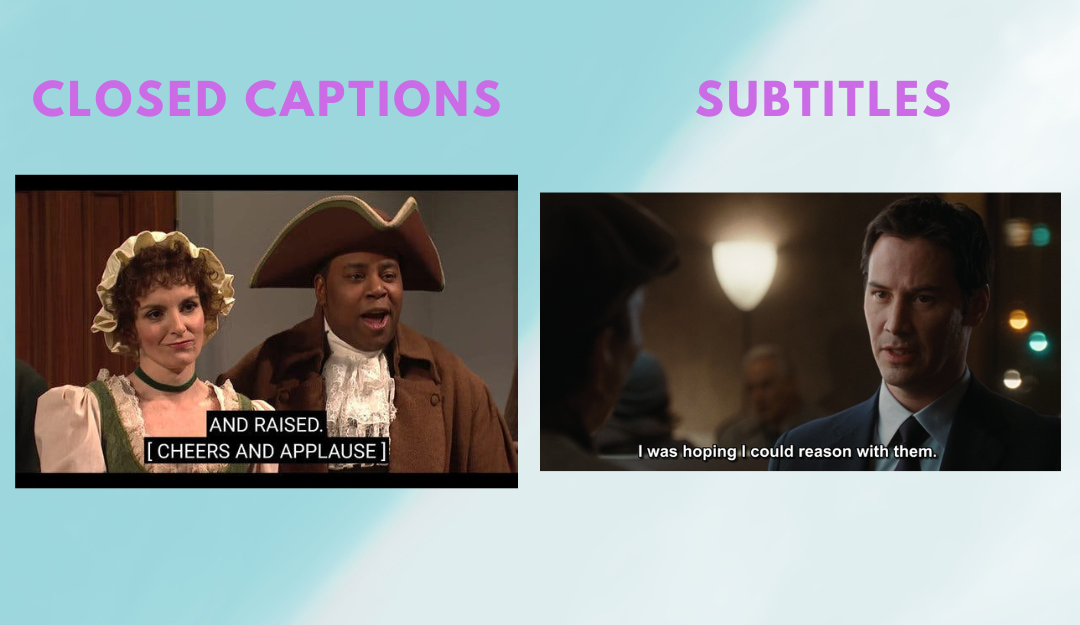

Both closed captions and subtitles are text versions of the audio spoken in the video. Closed captions are created for people who are deaf or hard of hearing. Hence, closed captions cover all relevant audio, including background sounds, sound effects, and speaker changes.

Subtitles, unlike closed captions, are created to translate the video audio into an alternate language the viewer understands. For example, a viewer who does not understand Spanish can easily watch the video with English subtitles. You may have seen movies shot in French and screened in a Hindi-speaking country with subtitles.

Closed captions more accurately convey what is going on on-screen, while subtitles are a meaningful way to translate the dialogue into another language. In both of them, the text on the screen is synchronized with the words spoken in the video.

| Closed Captions | Subtitles |
| --- | --- |
| Usually for viewers who are deaf or hard of hearing | Usually for viewers watching content in a foreign language |
| Include descriptions of all relevant background sounds | Include only the spoken dialogue as text |
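To see the difference in practice, here is a small Python sketch that reduces a caption line to subtitle-style dialogue by dropping the parts that only captions carry. It assumes sound descriptions are written in square brackets or parentheses and speaker tags are written in capitals followed by a colon. These are common conventions, not a rule every caption file follows, and the function name is hypothetical.

```python
import re

# Assumed conventions: "[door slams]" or "(laughs)" for sounds,
# "JOHN:" at the start of a line for speaker identification.
SOUND = re.compile(r"\[[^\]]*\]|\([^)]*\)")
SPEAKER = re.compile(r"^\s*(?:-\s*)?[A-Z][A-Z0-9 .'-]*:\s*")


def caption_to_dialogue(line: str) -> str:
    """Return only the spoken words from a single caption line."""
    line = SOUND.sub("", line)      # remove sound descriptions
    line = SPEAKER.sub("", line)    # remove a leading speaker tag
    return " ".join(line.split())   # tidy up leftover whitespace


if __name__ == "__main__":
    print(caption_to_dialogue("[door slams] JOHN: Who's there?"))  # Who's there?
```

A line that contains only a sound description, such as `[upbeat music]`, comes back empty, which is the kind of information a plain subtitle track leaves out.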

How Closed Captions vs Subtitles Terminology varies with Countries

The meaning of subtitles and captions varies by country. In Canada and the United States, the two terms have different meanings. Subtitles are for those who can hear but cannot understand the language, or for when the audio is not clearly audible. Closed captions, on the other hand, are for those who are deaf or hard of hearing and include spoken dialogue, music, and sound effects.

In the UK, Ireland, and many other countries, both are collectively called subtitles, and captioning for those who are hard of hearing is called ‘subtitles for the deaf and hard of hearing (SDH).’

In New Zealand, an ear icon with a line represents subtitles for the hard of hearing, now referred to as captions.

Importance of Multi-Language Captions and Subtitles

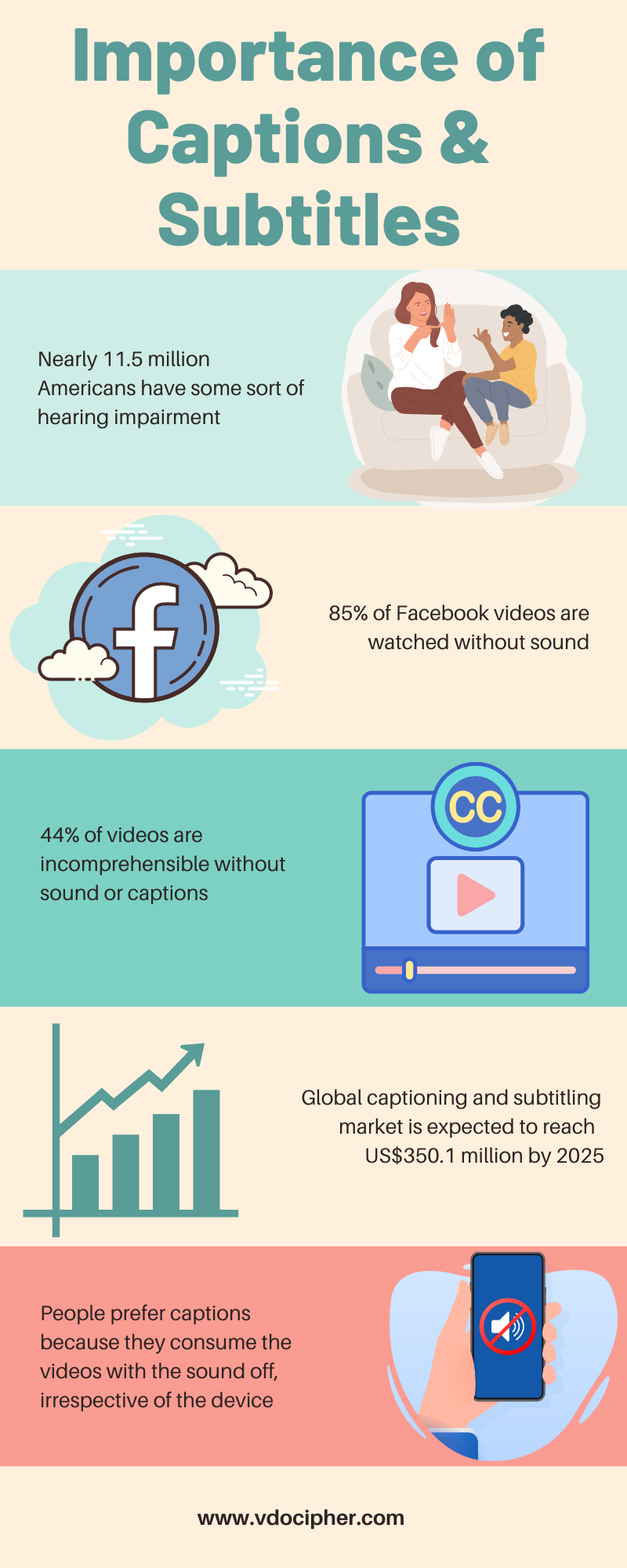

The global Captioning and Subtitling Solution market size is projected to reach US$ 476.9 million by 2028, from US$ 282 million in 2021, at a CAGR of 7.7% during 2022-2028.
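As a quick sanity check on these figures, the compound annual growth rate can be recomputed from the two market sizes. This is only a sketch: it assumes seven compounding periods between the 2021 base and 2028, and the helper name `cagr` is ours.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1 / years) - 1


if __name__ == "__main__":
    # US$ 282 million (2021) growing to US$ 476.9 million (2028).
    rate = cagr(282.0, 476.9, 7)
    print(f"{rate:.1%}")  # 7.8%
```

Under that assumption the result is roughly 7.8%, which is in line with the quoted 7.7%; the small gap likely comes from rounding or from how the forecast period is counted.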

The key drivers of this growing demand are the surging popularity of video content and the rise of streaming platforms like Netflix and Amazon Prime with multilingual subtitle support. These platforms, the media, and all video production-related industries use multi-language subtitles and captions to reach wider audiences and make content accessible across the globe, irrespective of language barriers.

Captions and subtitles are not only for viewers who are hard of hearing or non-native speakers; they also help those who find fast speech hard to follow or who are watching in a noisy environment. Viewers can choose their language, and multilingual subtitles let content go global by breaking language constraints.

Internet penetration has allowed worldwide access to videos on various channels. Everything from social platforms to eLearning courses is readily available in video format. Creators cannot produce the same video several times in different languages; instead, they attach subtitles to it. Search engines and platforms such as Google and YouTube use the text accompanying a video to index it.

In his 2020 Golden Globes acceptance speech, Parasite director Bong Joon-ho said: ‘Once you overcome the one-inch tall barrier of subtitles, you will be introduced to so many more amazing films.’

Need for Captions and Subtitles

Improves Accessibility for people

According to the World Health Organization, by 2050, nearly 2.5 billion people are projected to have some degree of hearing loss. Currently, more than 5% of the global population has disabling hearing loss. With such a growing population of hard-of-hearing and deaf individuals, adding captions and subtitles increases accessibility. Viewers will leave a video, or the website where it is hosted, if the content is not accessible to them.

The Americans with Disabilities Act (ADA) of 1990 is a civil rights law that prohibits discrimination against persons with disabilities. Although the ADA does not explicitly mention online video captioning, numerous lawsuits have set legal precedents on video accessibility. In the 2012 case National Association of the Deaf v. Netflix, the court categorized Netflix as a ‘place of public accommodation’, which is why it is required to provide closed captioning.

Language barrier

Providing subtitles helps considerably in reaching wider audiences all over the world. According to Statista, in 2022 nearly 1.5 billion people worldwide spoke English as their native or second language, slightly more than the 1.1 billion Mandarin Chinese speakers. The United States is multicultural, and Spanish is the second most common language spoken there. With such a diversity of languages, subtitling is one of the most effective ways to realize the full potential of your content.

Retains Viewership

Closed captioning helps viewers watch videos in sound-sensitive environments. Whether people are commuting on public transport or have to keep the volume low, closed captioning is of great importance. Even with the sound on, viewers are likely to read captions if the speech is fast or some words are unclear or unknown. Captions keep the video meaningful even with no sound, which increases the retention rate and the average watch time.

Improves SEO

When you publish videos on platforms such as Google, YouTube, or Facebook, their algorithms index the videos through the text associated with them. Search engines also treat each language as a separate result, so videos with translated SRT (SubRip Subtitle) files tend to rank in more searches. A plain-text SRT file contains the sequential number of each subtitle, the timecodes that synchronize the text with your audio, and the subtitle text itself.

Improves language learning

For most of us, watching foreign-language videos gives us insight into the language. It helps us pick up words, conversations, and colloquialisms. Subtitles displayed on the screen along with the video further improve your vocabulary. In addition to the words, you get a visual element associated with them, so you learn them and remember them for longer.

Subtitle and Caption File Formats

The file format of captions and subtitles depends on the website your videos are hosted on. Common formats include SubRip Video Subtitle Script (*.srt), WebVTT (*.vtt), Sonic Scenarist Closed Caption (*.SCC), Flash XML in Timed Text Authoring Format DFXP (*.xml or *.dfxp), PBS COVE (*.sami), and many more. The most popular formats for video streaming are .srt and .vtt.


SRT File Format

SRT stands for SubRip Text and is the most basic and easy-to-use subtitle format. It was initially used to extract captions and subtitles from media files using DVD-ripping software, hence the name. Before the SRT format, caption files used XML-based code, which was clunky. Each subtitle in an SRT file has four parts:

- A numeric counter indicating the number or position of the subtitle

- The start and end time of the subtitle, separated by the characters -->

- Subtitle text in one or more lines

- A blank line indicating the end of the subtitle
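The four parts listed above can be read back with a few lines of Python. The snippet below is a minimal sketch rather than a full SRT parser: it assumes well-formed blocks with `HH:MM:SS,mmm` timestamps, and the names `Cue` and `parse_srt` are chosen for this example only.

```python
import re
from dataclasses import dataclass

TIMING = re.compile(
    r"(\d{2}):(\d{2}):(\d{2}),(\d{3})\s*-->\s*(\d{2}):(\d{2}):(\d{2}),(\d{3})"
)


@dataclass
class Cue:
    index: int      # numeric counter
    start_ms: int   # start time in milliseconds
    end_ms: int     # end time in milliseconds
    text: str       # one or more lines of subtitle text


def _to_ms(h: str, m: str, s: str, ms: str) -> int:
    return ((int(h) * 60 + int(m)) * 60 + int(s)) * 1000 + int(ms)


def parse_srt(content: str) -> list[Cue]:
    """Split SRT text on blank lines and read each block's four parts."""
    cues = []
    normalized = content.replace("\r\n", "\n").strip()
    for block in re.split(r"\n\s*\n", normalized):
        lines = block.split("\n")
        if len(lines) < 2 or not lines[0].strip().isdigit():
            continue  # skip anything that is not a numbered block
        match = TIMING.match(lines[1].strip())
        if match is None:
            continue  # skip blocks without a valid timing line
        g = match.groups()
        cues.append(
            Cue(int(lines[0]), _to_ms(*g[:4]), _to_ms(*g[4:]), "\n".join(lines[2:]))
        )
    return cues


SAMPLE = """1
00:00:01,000 --> 00:00:04,000
[door creaks]

2
00:00:04,500 --> 00:00:07,250
Who's there?
It's me.
"""

if __name__ == "__main__":
    for cue in parse_srt(SAMPLE):
        print(cue.index, cue.start_ms, cue.end_ms, repr(cue.text))
```

Keeping times in milliseconds makes it easy to check, for instance, that no subtitle ends before it starts or overlaps the next one.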

VTT File Format

The next most popular caption and subtitle file format is Web Video Text Tracks (WebVTT). The main difference between the VTT and SRT formats is that VTT can include metadata, while SRT cannot. Metadata in your subtitles can help online visibility. The VTT format was created in 2010, with SRT as its base and with support for HTML5 functionality. Its features include font, color, and text formatting, as well as positioning of the text on the video. A VTT file can also carry:

- Chapters for content navigation

- Text video descriptions

- Other metadata aligned with audio or video content
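Because VTT builds on SRT, a basic conversion is short. The Python sketch below assumes a plain SRT file with no styling: it adds the `WEBVTT` header that every VTT file starts with and switches the millisecond separator in timing lines from a comma to a dot. The function name `srt_to_vtt` is our own, and the numeric counters are kept because WebVTT accepts them as optional cue identifiers.

```python
import re

SRT_TIME = re.compile(r"(\d{2}:\d{2}:\d{2}),(\d{3})")


def srt_to_vtt(srt_text: str) -> str:
    """Convert simple SRT text to WebVTT text."""
    body = srt_text.replace("\r\n", "\n").strip()
    lines = [
        # Only touch timing lines so commas inside dialogue are preserved.
        SRT_TIME.sub(r"\1.\2", line) if "-->" in line else line
        for line in body.split("\n")
    ]
    return "WEBVTT\n\n" + "\n".join(lines) + "\n"


if __name__ == "__main__":
    srt = "1\n00:00:01,000 --> 00:00:04,000\nHello, world\n"
    print(srt_to_vtt(srt))
```

Styling, positioning, chapters, and other metadata would then be added on top of this basic structure.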

How to Upload your Subtitles and Captions for Hosted Videos

According to multiple video hosting providers, content creators are now more focused on the security of their video content and on advanced player integration. The player is what synchronizes your content with the attached captions. It is therefore the responsibility of the hosting provider to equip the player with the functionality needed to attach and select subtitles and captions in multiple languages.

Advanced hosting providers offer not only a dashboard and APIs for attaching subtitle and caption files but also DRM encryption. For example, VdoCipher provides APIs and plugins for easy integration, along with a versatile dashboard for attaching subtitle and caption files manually. The dashboard also covers security, access management, player customization, analytics, and other important features that a professional video creator requires.

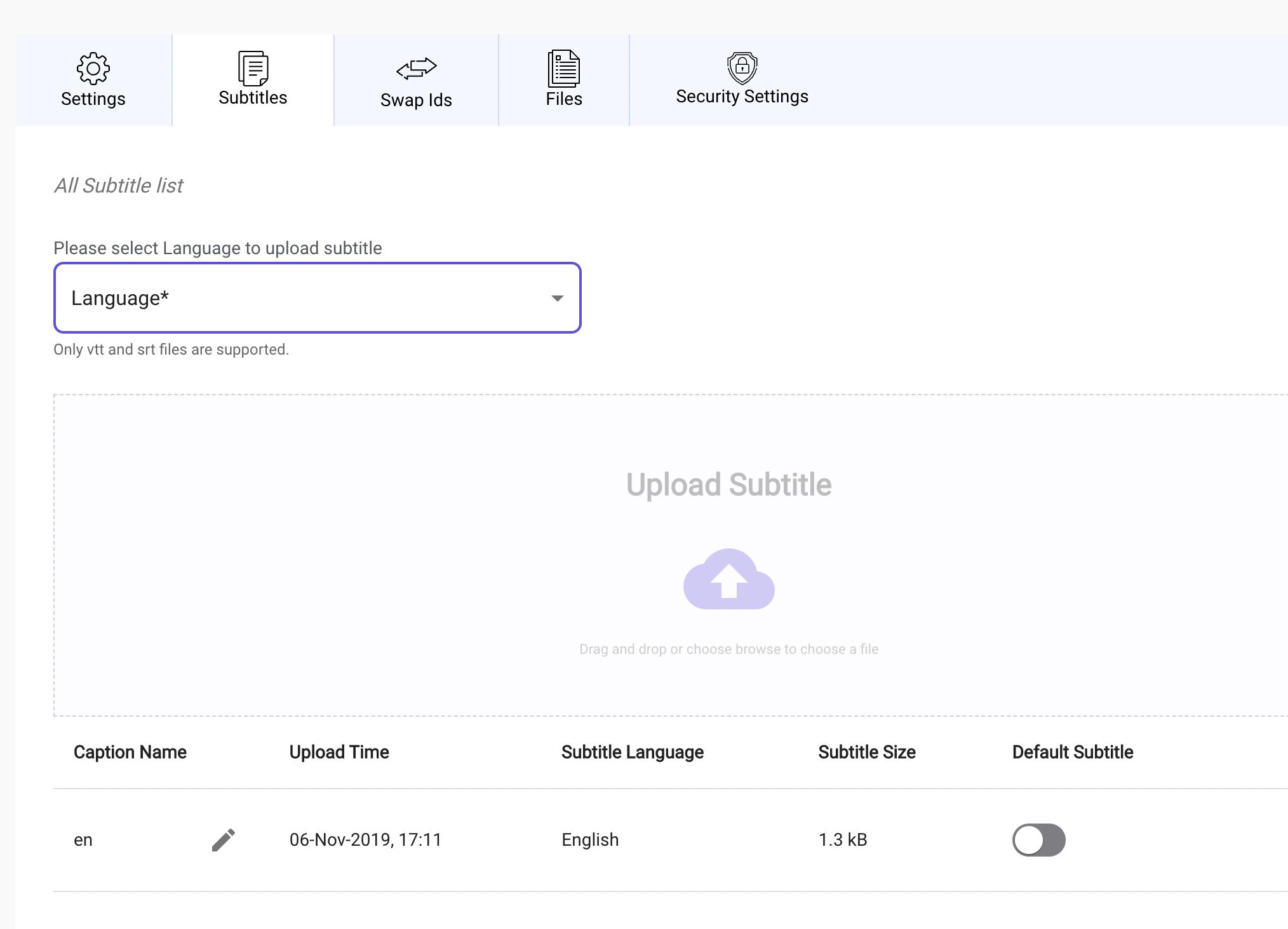

To add single- or multi-language subtitles or captions through the VdoCipher dashboard, follow these steps.

- Sign in to VdoCipher Dashboard.

- Click on the edit button of the video where captions/subtitles are to be added.

- Now click on the subtitles tab.

- Select your desired language and upload the respective VTT or SRT file.

- The file is now attached, and viewers can turn it on during playback of that video.

Note: Other video platforms, such as YouTube, follow similar steps for uploading captions, with minor differences in the dashboard options.

You can read about how to add subtitles to a video in more detail on one of our other blogs.

Closed Captions vs Subtitles

Closed captions and subtitles are two types of text that can be added to video content to help viewers understand what is being said on-screen. Closed captions are designed to provide a complete textual representation of the audio, including background sounds and speaker changes, making them ideal for viewers who are deaf or hard of hearing. Subtitles, on the other hand, assume that the viewer can hear the audio and only provide a textual representation of the spoken dialogue. As a result, subtitles may not include important contextual information like sound effects or speaker identification. Both closed captions and subtitles can be useful for viewers who are not fluent in the language of the video, but closed captions are typically preferred for accessibility purposes.

FAQs

How to turn off closed captions on Android?

There are two ways of turning off closed captions on Android.

- Go to the watch page of any video.

- Tap the video player once to show options, then tap CC to turn on captions.

- To turn off captions, tap CC again.

Or

- On an Android device, go to Settings.

- Go to Additional settings > Accessibility > Caption preferences (under Hearing) and use the toggle.

- There are also options to change caption size, style, and language preferences.

Can Captions and subtitles be downloaded on YouTube?

Yes, YouTube videos with subtitles attached to them can be downloaded as text. It could help in offline viewing, research or studying.

How to turn on closed captions on Netflix?

Open Netflix and start playing a title. Select the Audio & Subtitles icon at either the bottom or top of the screen. Choose from the languages shown, or select Other to see all language options based on your location and language settings.


Jyoti began her career as a software engineer in HCL with UNHCR as a client. She started evolving her technical and marketing skills to become a full-time Content Marketer at VdoCipher.